3-Sigma (3σ) Testing

No two examples of the same product are perfectly identical. Manufacturing tolerances guarantee some variation in the breaking strength of different "identical" items; that is unavoidable, and the manufacturer should take it into account.

The best way would be to pull-test (to failure) every device that they produce and then report all the results. This is impractical and would not leave any products for sale, so they pull-test a smaller sample.

Testing a sample and reporting the average sounds reasonable at first glance. The average strength of a random sample taken from a production run is an unbiased estimate of the average for the entire run (or near enough in practice that the difference does not matter[1]), but it does not tell us how much variation there is in the breaking strength. About half the samples will break below the average, and since we mostly care about the minimum strength, we need to know how far below.

Statisticians can calculate a sample standard deviation “s” that is a measure of this variation. They assume that this is an unbiased estimate of the standard deviation “σ” for all items, tested or not. While not precisely true in all cases, in practice this is close enough not to matter, provided they test enough samples. The manufacturer can then use this to calculate a minimum breaking strength that most items from their production run will exceed.

Some companies and standard organizations have agreed to calculate the minimum breaking strength by subtracting 3 times s from the average. This is known as “3-Sigma (3σ) Testing.”
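
As a minimal sketch of that arithmetic, the snippet below computes the average, the sample standard deviation s, and the resulting 3σ rating. The pull-test values, the kN unit, and the function name `three_sigma_rating` are made up for illustration; they are not data from any manufacturer.

```python
import statistics

# Hypothetical pull-test results in kN (illustrative values, not real data).
breaking_strengths_kn = [24.1, 25.3, 23.8, 24.9, 25.6, 24.4, 23.5, 25.0, 24.7, 24.2]


def three_sigma_rating(samples):
    """Return (average, s, average - 3*s) for a list of breaking strengths."""
    mean = statistics.mean(samples)
    s = statistics.stdev(samples)  # sample standard deviation (n - 1 divisor)
    return mean, s, mean - 3 * s


mean, s, rating = three_sigma_rating(breaking_strengths_kn)
print(f"n = {len(breaking_strengths_kn)}, average = {mean:.2f} kN, s = {s:.2f} kN")
print(f"3-sigma rating = {rating:.2f} kN")
```

Note that `statistics.stdev` divides by n - 1, which is the usual convention for a sample; `statistics.pstdev` divides by n and would give a slightly smaller s.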

You may hear that 99.9% of the samples will break at or above the 3σ breaking strength. That is not true. The 99.9% number comes from what mathematicians call a “Normal” (or “Gaussian”) distribution, where about 99.87% of the values lie above the average minus 3σ. Breaking strength cannot follow a Normal distribution[2]. The percentage may be high, but do not expect it to be 99.9%. More importantly, σ is unknown, so the calculation uses s instead. This adds uncertainty. Companies can reduce the uncertainty by testing a lot of samples. It is hard to say how many[3], but at least 20 or 30 would be a good guess to start with.
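
For reference, here is where the 99.9% figure comes from. This sketch evaluates the Normal cumulative distribution with the standard library's `math.erf`; the function name `normal_fraction_above` is only illustrative. It applies only if breaking strength really were Normal with a known σ, which is exactly the assumption questioned above.

```python
import math


def normal_fraction_above(k):
    """Fraction of a Normal population lying above (average - k * sigma)."""
    return 0.5 * (1.0 + math.erf(k / math.sqrt(2.0)))


for k in (1, 2, 3):
    pct = 100 * normal_fraction_above(k)
    print(f"{pct:.2f}% of a Normal population lies above average - {k} sigma")
```

For k = 3 this gives about 99.87%, which is usually rounded to 99.9%.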

Why 3σ? After all, particle physicists require “5σ” before claiming a discovery, and we are interested in something with life-and-death consequences. The assumption is that we will not load our device to anything close to the minimum breaking strength, so we will be fine.

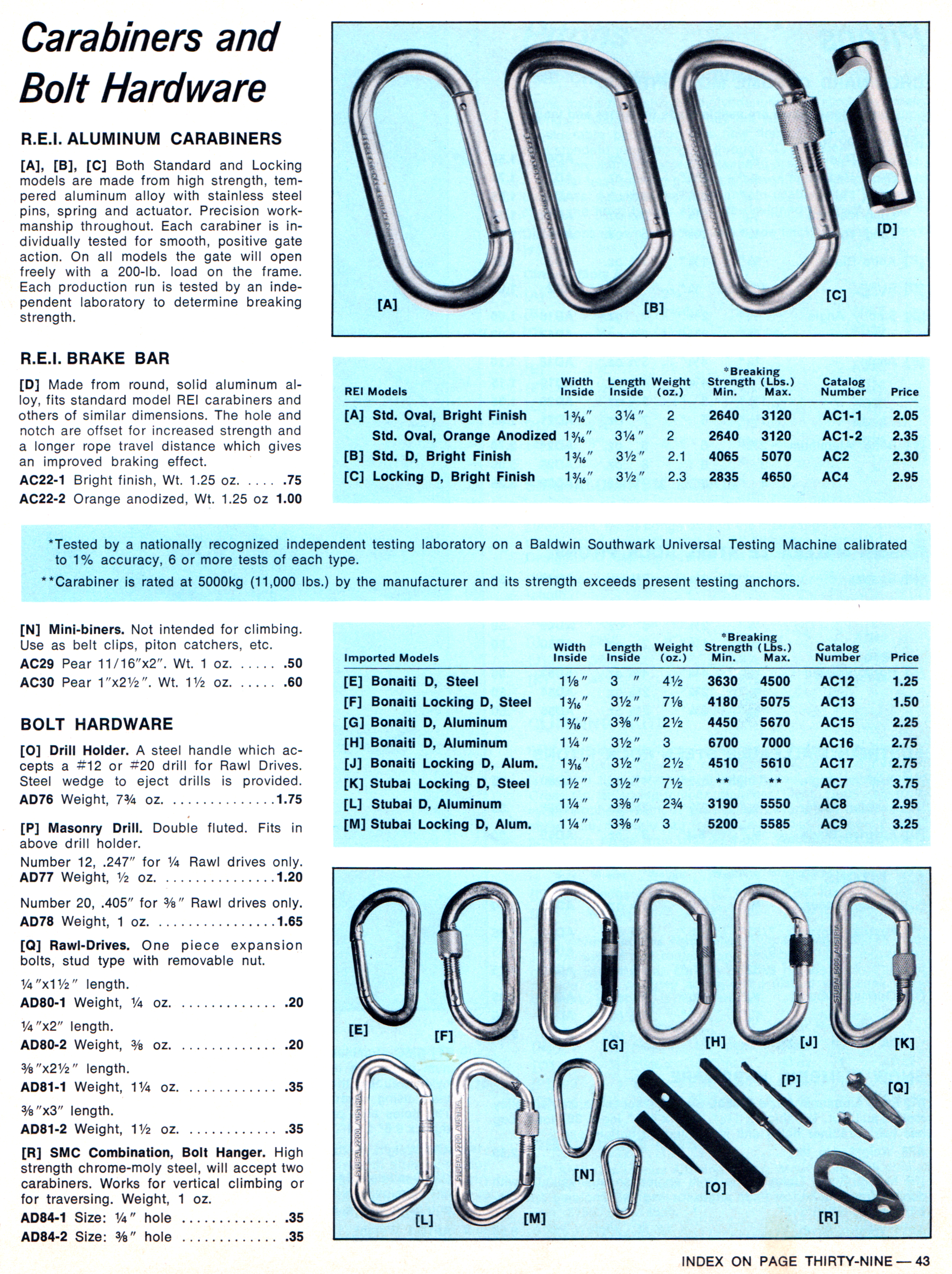

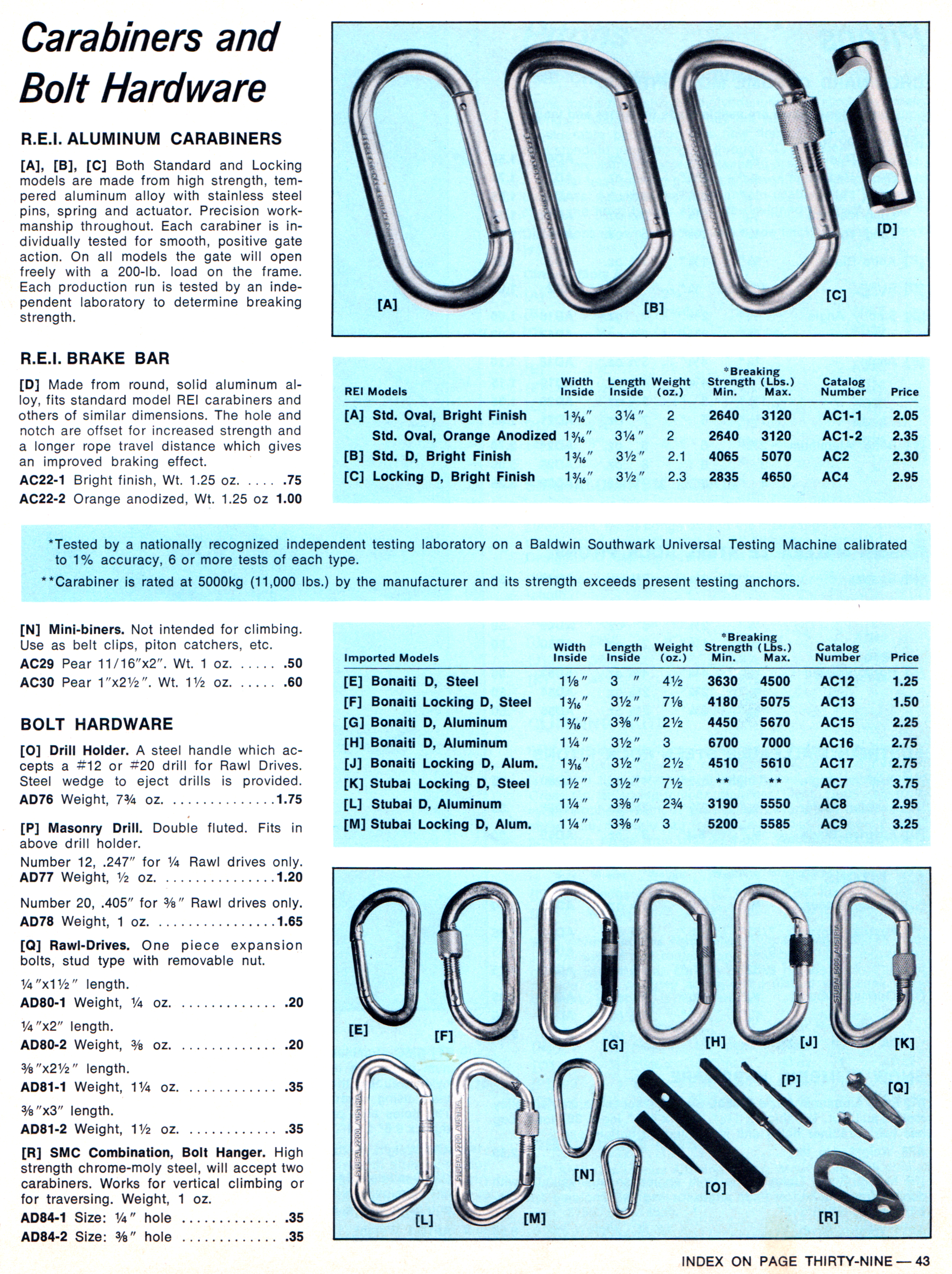

Telling me a minimum 3σ breaking strength without telling me how many they tested and what the average was does not give me any information on how much confidence I can justify having in the result[4]. My personal preference would be to see companies report the highest (or average) and lowest breaking strength as well as how many samples were tested. REI used to do this, as this page from their 1972 catalog shows.
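
To see why the number of samples matters, the rough simulation below repeats an imaginary test campaign many times and looks at how much the computed 3σ rating moves around. Everything in it is an assumption made for illustration: the Normal distribution (which, as noted, breaking strength cannot truly follow), the 25 kN average, the 1 kN standard deviation, and the function name `simulated_ratings`.

```python
import random
import statistics


def simulated_ratings(n, trials=2000, mu=25.0, sigma=1.0, seed=0):
    """Simulate `trials` test campaigns of n samples each; return the 3-sigma ratings.

    Assumes Normal breaking strengths with average mu and standard
    deviation sigma (both hypothetical, in kN).
    """
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    ratings = []
    for _ in range(trials):
        sample = [rng.gauss(mu, sigma) for _ in range(n)]
        ratings.append(statistics.mean(sample) - 3 * statistics.stdev(sample))
    return ratings


for n in (5, 30):
    ratings = simulated_ratings(n)
    print(f"n = {n:2d}: ratings range from {min(ratings):.1f} to {max(ratings):.1f} kN, "
          f"spread (std dev) = {statistics.stdev(ratings):.2f} kN")
```

Under these assumptions the rating computed from 5 samples scatters far more widely than the one computed from 30, so the same published number can carry very different amounts of information.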

Fortunately, most climbing gear is so strong that the actual strength does not really matter. If we are using gear near its published breaking strength, we are knowingly taking a significant and unknown risk.

End Notes:

[1] Contrary to some online reports, outliers do not greatly skew the average, provided we test a lot of samples.

[2] For example, breaking strength is always positive. Assuming a Normal distribution is useful but may be wildly inaccurate, especially far from the average.

[3] It depends on the unknown distribution of the breaking strengths.

[4] This brings in the whole subject of confidence intervals. I won’t discuss those here.